The ultimate guide to Bluetooth headphones: LDAC isn't Hi-Res

August 13, 2024

Sony’s LDAC has risen to popularity as the audiophile-grade Bluetooth codec, owing to its substantially higher 990kbps bit-rate versus other codecs — and the promise of 24-bit/96kHz Hi-Res playback. Unfortunately, Sony has rarely given out much information about exactly how LDAC achieves this. We’re here to see if LDAC really delivers on the hype, or if the industry has been enjoying another audio placebo.

Editor’s note: this article was updated on August 13, 2024, for formatting, timeliness, and data updates.

What is LDAC?

LDAC is a Bluetooth codec, and it is currently the go-to option for high-end headsets that want higher-bitrate audio over wireless. While it’s not currently possible to completely match CD quality over wireless, LDAC makes a good effort by combining creative compression with higher bitrates than other codecs offer.

Though the codec is on the “newer” side compared to others like AAC and SBC, it has been a mandatory option on Android phones since Android 8.0. Ever since, the codec has seen its use grow on the back of the success of the WH-1000X-series headphones. However, Apple devices do not support the codec, opting to stick with SBC and AAC instead. If you have an iPhone, you’re not using LDAC.

Sony makes two major claims about LDAC. First, that its 990kbps top speed can maintain the maximum bit depth and frequency of 24-bit/96kHz Hi-Res audio files. Secondly, that the codec can transmit 16-bit/44.1kHz CD quality files completely untouched.

The company also makes smaller statements about LDAC’s lower bit rate settings, namely that its 330kbps setting “achieves the higher sound quality than conventional codecs, even in a bad connection environment.” All are claims that are worthy of some scrutiny beyond blind acceptance. Our testing found that:

- LDAC, like all Bluetooth codecs, simply isn’t capable of passing Hi-Res content unaltered, and falls short of wired 24/96 equivalents — but it still does pretty well.

- The 990 and 660kbps bit rates are roughly as good as CD quality, but quickly lose fidelity above 20kHz.

- Smartphones rarely pick the 990kbps option when connecting to LDAC equipment.

- For a highly stable connection, aptX and even SBC are better choices than LDAC 330kbps.

Frequency response up to 96kHz?

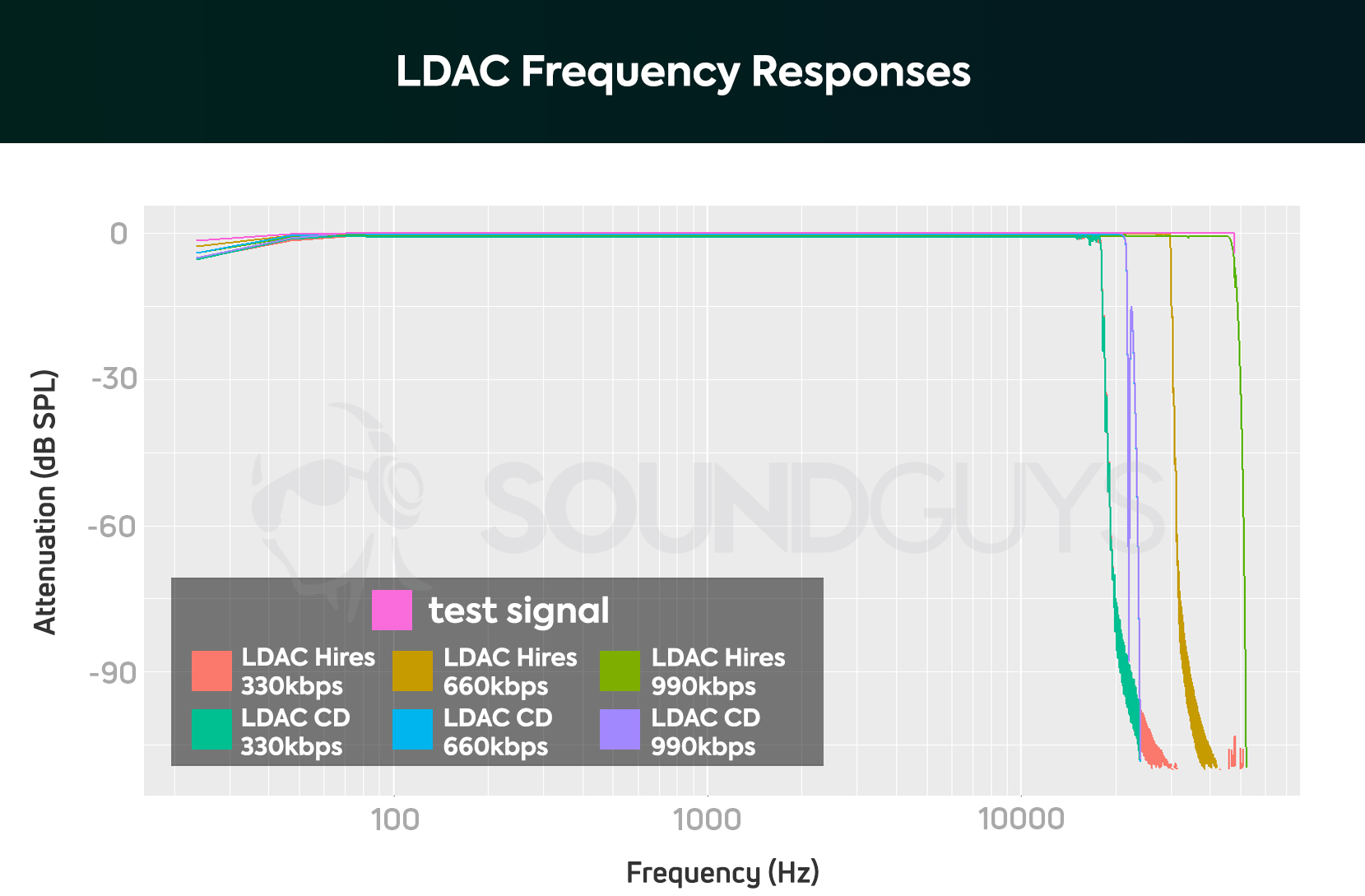

The first item we put to the test is LDAC’s frequency response. In Hi-Res mode, the codec should pass audio data up to 48kHz, and in CD mode should reach 20kHz untouched. If you want to configure these settings yourself, you can find them in the Developer Settings of your Android smartphone.

Here are the results:

First, the good news: the 990kbps setting works as advertised. The codec transmits audio right up to 47kHz before slowly rolling off, granting it Hi-Res status. The 660kbps mode rolls off incredibly steeply at 30kHz, making it an odd middle ground between CD and Hi-Res, but that’s more than enough for most people. Both settings roll off at 21.5kHz when in CD mode, which is pretty much bang on the money.

However, LDAC’s 330kbps setting falls short of CD quality regardless of which mode it’s in, with a very steep, high-ripple filter kicking in just before 18kHz. There isn’t much audible content above this frequency and most people’s ears can’t go any higher, but some concessions have clearly been made to make LDAC work at this low bit rate.

Sony’s claim that LDAC achieves better sound than conventional codecs is suspect based on this data point. When testing aptX and regular SBC, we found that these codecs extend all the way up to 19kHz before beginning a smooth roll-off, reaching just -6dB at 20kHz.

The two major takeaways so far: only LDAC 990kbps matches the frequency criteria for Hi-Res and LDAC 330kbps is worse than CD quality in terms of the measured frequency spectrum.

Noise floor and distortion

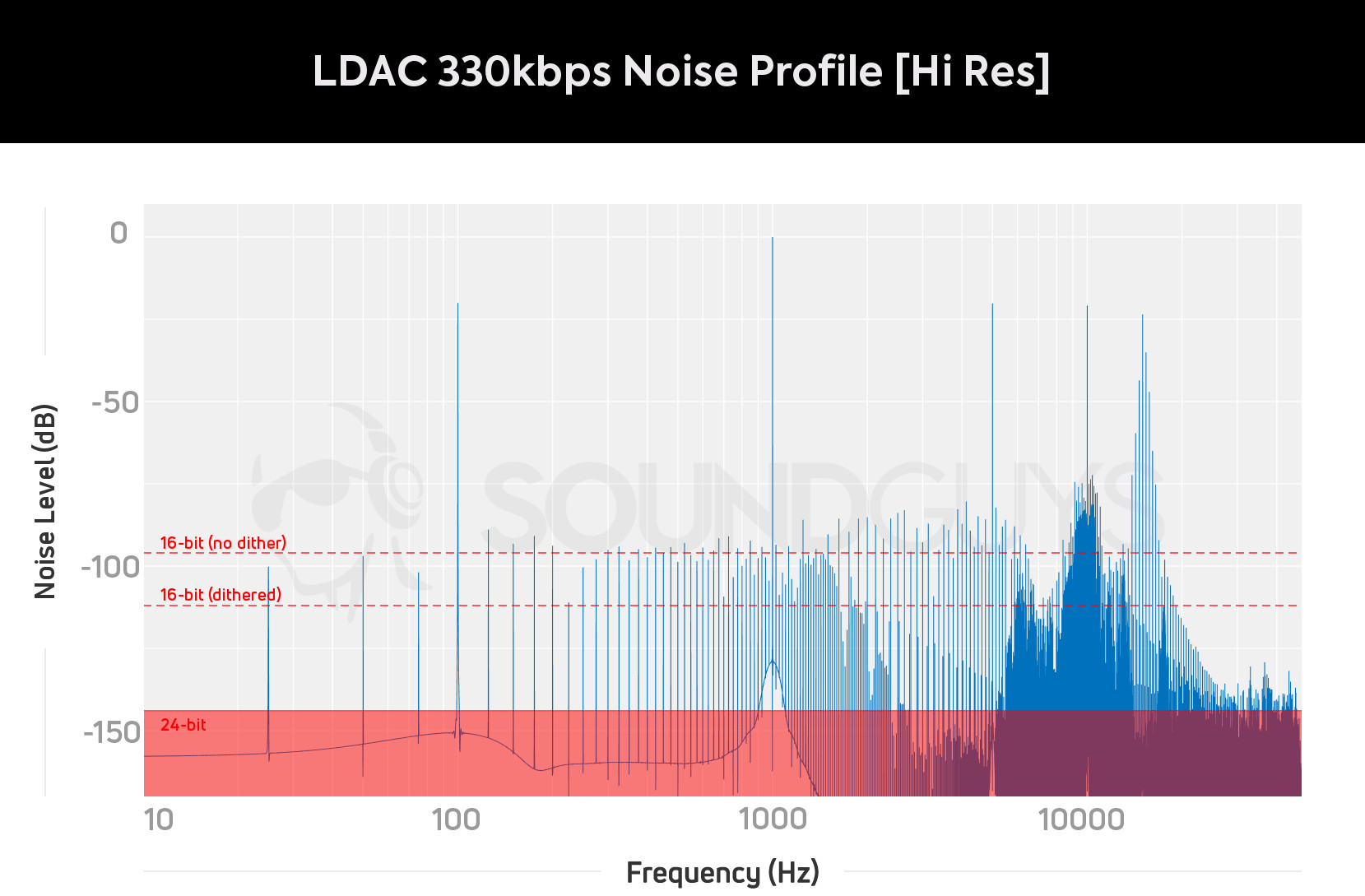

Frequency response is just one part of the quality equation and technically anything around 20kHz is good enough to match human perception. Codecs also mess with noise floor and bit depth to save on bit rate, and compressing files too much can lead to distortions and objectionable artifacts. Needless to say, any Hi-Res claims won’t match up if LDAC takes shortcuts here.

When someone says “CD quality,” they usually mean that it meets certain performance thresholds. For example, a CD contains music with a bit depth of 16. This gives us a theoretical noise floor of -96dB, while adding something called “dithering” improves the noise floor to somewhere around -112dB in the audible spectrum. Hi-Res 24-bit offers far less noise, and -124dB is the practical limit for even the best recording devices. For clarity, we’ve marked these limits on our graphs. If noise exceeds these limits, the codec technically cannot claim “CD-quality.”
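The theoretical figures above follow from a standard rule of thumb: each extra bit of depth adds roughly 6.02dB of dynamic range. Here is a minimal Python sketch of that arithmetic. The function name is an illustrative choice, and the calculation ignores dithering and noise shaping, so it reproduces only the undithered limits.

```python
import math


def theoretical_noise_floor_db(bit_depth: int) -> float:
    """Undithered quantization noise floor relative to full scale, in dB.

    Uses the simple 20 * log10(2 ** bits) ratio, about 6.02 dB per bit.
    Dithering and noise shaping are deliberately left out.
    """
    return -20 * math.log10(2 ** bit_depth)


for bits in (16, 24):
    print(f"{bits}-bit: {theoretical_noise_floor_db(bits):.1f} dB")
```

This gives about -96dB for 16-bit audio and about -144dB for 24-bit audio, which is why the practical -124dB limit of real recording equipment sits well above what the 24-bit format can describe on paper.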

First, let’s look at how the three LDAC quality options compare when tackling Hi-Res content.

For starters, 990kbps clearly does raise the noise floor compared with the full 24-bit Hi-Res -144dB target, but not linearly. The noise floor appears to go as low as -130dB below 500Hz before clocking in at around a very respectable -116dB right up until about 15kHz, making this better than what we might expect from even a dithered CD. We can also note that the noise floor increases at high frequencies above 15kHz, reaching highs of -98dB. Certainly not what you would see with wired Hi-Res playback, but this isn’t going to be an issue in the real world. Did you spot that the noise floor seems to fall again after the last spike? We’ll explore this a little more in a minute.

The 660kbps encoded signal is clearly noisier at low frequencies and the noise floor starts to increase a little sooner. The result hits about -110dB at 2kHz, rising to -102dB above 5kHz and then scaling up to -74dB at around 15kHz. Again this is very decent for the most sensitive parts of human hearing (below 8kHz), but there’s a risk that higher frequency details may start to be masked by the noise. LDAC employs a similar noise floor scaling idea to aptX and regular SBC, but this isn’t really a true Hi-Res option.

Unfortunately, LDAC’s 330kbps setting has noticeably worse quality. The noise floor increases substantially to a not-unreasonable -90dB at low frequencies, hitting -80dB between 1kHz and 5kHz, -72dB around 10kHz. At 15kHz the result peaks at -35dB, which will definitely interfere with the high-frequency presentation. Regular Bluetooth SBC is nowhere near this bad, and this is by far the worst high-frequency performance I have seen from any Bluetooth codec.

LDAC's Hi-Res marketing does not stack up, and the 330kbps setting is worse than SBC.
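To make the comparison concrete, the approximate readings quoted above can be lined up against the theoretical 16-bit limit of -96dB. The Python sketch below does only that. The numbers are the rounded values from the text rather than raw measurement data, and the band labels and function name are illustrative.

```python
# Approximate Hi-Res noise-floor readings (dB) quoted in the text,
# keyed by LDAC bit rate in kbps. These are rounded, not raw data.
MEASURED_DB = {
    990: {"below 15kHz": -116, "above 15kHz": -98},
    660: {"2kHz": -110, "above 5kHz": -102, "15kHz": -74},
    330: {"low frequencies": -90, "1kHz to 5kHz": -80, "10kHz": -72, "15kHz": -35},
}

CD_UNDITHERED_DB = -96  # theoretical 16-bit noise floor


def bands_worse_than(limit_db, readings):
    """Return the bands whose noise floor is louder (less negative) than limit_db."""
    return [band for band, level in readings.items() if level > limit_db]


for kbps, readings in MEASURED_DB.items():
    print(f"{kbps}kbps:", bands_worse_than(CD_UNDITHERED_DB, readings))
```

With these figures, 990kbps stays below the 16-bit limit in both bands, 660kbps exceeds it only at around 15kHz, and 330kbps exceeds it in every band listed.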

Speaking of high-frequency content, let’s loop back to the point about noise floor. A second test throwing more high-frequency harmonics into the mix seems to stimulate LDAC into action above 20kHz. In other words, the codec appears to save on bandwidth by ignoring frequency bands that don’t contain any content, much like MP3 and AAC. It makes sense: why on Earth would you care about stuff that isn’t getting sent over anyway?

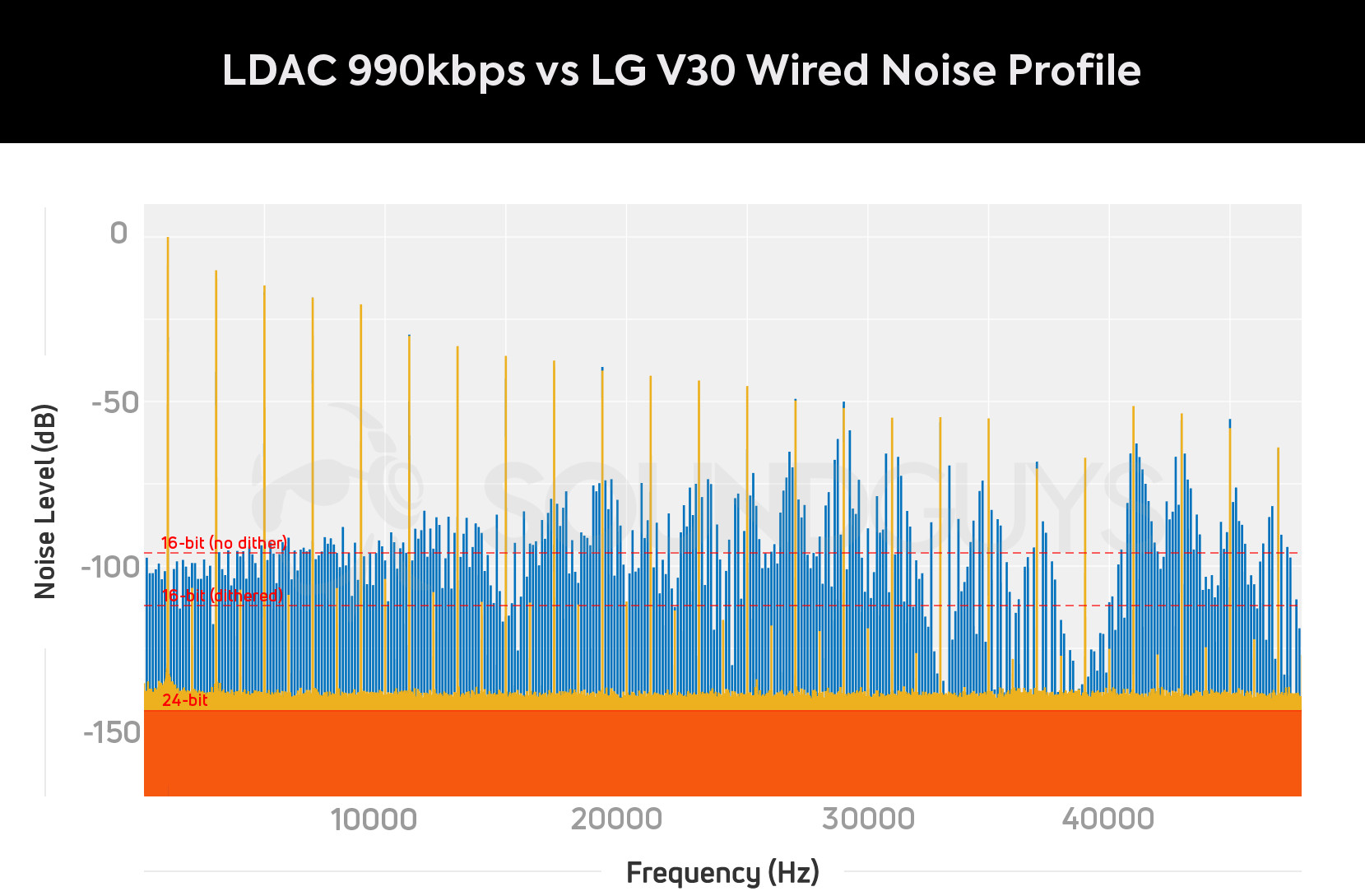

We see that the noise floor increases even further at higher frequencies. By 15kHz, the results are notably worse than CD quality, let alone Hi-Res. The graph above compares the result to the ESS SABRE 9218P DAC output from the LG V30+ smartphone. Although the V30+ suffers from some notable harmonic distortion, we can clearly see a constant noise floor that’s substantially quieter than Sony’s LDAC. Even at 990kbps, LDAC is a long way off matching the capabilities of 24-bit/96kHz wired equivalents.

For 660kbps, the results again appear very similar. The noise floor creeps up much more noticeably for high frequencies above 15kHz, and is certainly worse than CD quality here. With 330kbps there’s generally high noise overall, and again we can see the setting’s steep roll-off at 18kHz.

Hi-Res offers content above 20kHz, yet LDAC suffers from diminishing fidelity at these high frequencies. It's much better as a CD quality codec — except for the 330kbps setting which is poor by all measured criteria.

Finally, here’s the performance when the codec is set into CD quality mode.

The story is much the same as with Hi-Res, but the noise floors for 990 and 660kbps are slightly worse here because of the limits of our 16-bit test files. Both 990 and 660kbps offer a noise floor of about -112dB (within a margin of error), right around what we’d expect for a properly-dithered CD track. There’s a slight uptick in the 990kbps noise floor above 15kHz, but at -100dB it’s not an issue. Again the 660kbps setting shows a notable increase in the noise floor above 10kHz, starting at -85dB and reaching -65dB near cut-off.

660kbps LDAC is a close match to aptX HD. LDAC 660kbps comes out ahead at most frequencies tested, but aptX HD manages to keep its very high-frequency noise floor lower. 990kbps does the best overall, but high-frequency content up to 8kHz is unlikely to be perceptibly different at real-world listening volumes.

At CD quality, LDAC 990kbps and 660kbps are a touch better than aptX HD, yet both require even more bandwidth.

When set to CD quality, 330kbps LDAC is much the same as before. The noise floor profile fits into the same margin of error, stuck in the region of -83dB for low frequencies, -75dB in the mids, and as high as -33dB near the cut-off frequency. It performs worse than aptX and regular Bluetooth SBC at all frequencies, yet all use similar bandwidths.

LDAC is only as good as its connection strength

A quality Bluetooth codec is only as good as its connection strength. We’ve all experienced those irritating skips, and the risk of missed packets increases substantially as bandwidth requirements increase. That is why Sony’s codec scales between 990 and 330kbps, to prioritize either quality or a stable connection. The important question is what consumers can actually experience in the real world.

Out of the box, LDAC defaults to its “Best Effort (Adaptive Bit Rate)” option, which will pick 330, 660, or 990kbps based on the strength of your connection. For starters, let’s test which mode a number of smartphones default to when connecting to our LDAC test gear at just an arm’s length away.

| Phone | LG V30+ | Samsung Galaxy Note 8 | Huawei P20 Pro | Huawei P20 | Google Pixel 3 XL | Google Pixel 3 |
|---|---|---|---|---|---|---|
| LDAC 'Best Effort' setting | 990kbps | 660kbps | 660kbps | 660kbps | 330kbps | 330kbps |

Out of the phones we tested, only the LG V30+ defaulted to 990kbps. Other phones tend to start at the 660kbps option, lowering their quality if the connection worsens. The new Google Pixel 3 and 3 XL review units were stuck on 330kbps, so perhaps better support is coming in the future. The bottom line is: it’s quite unlikely that your phone will opt for 990kbps LDAC unless you manually force the settings via the developer options.

660kbps appears to be LDAC's most common 'Best Effort' setting. On many phones, you have to manually force 990kbps.

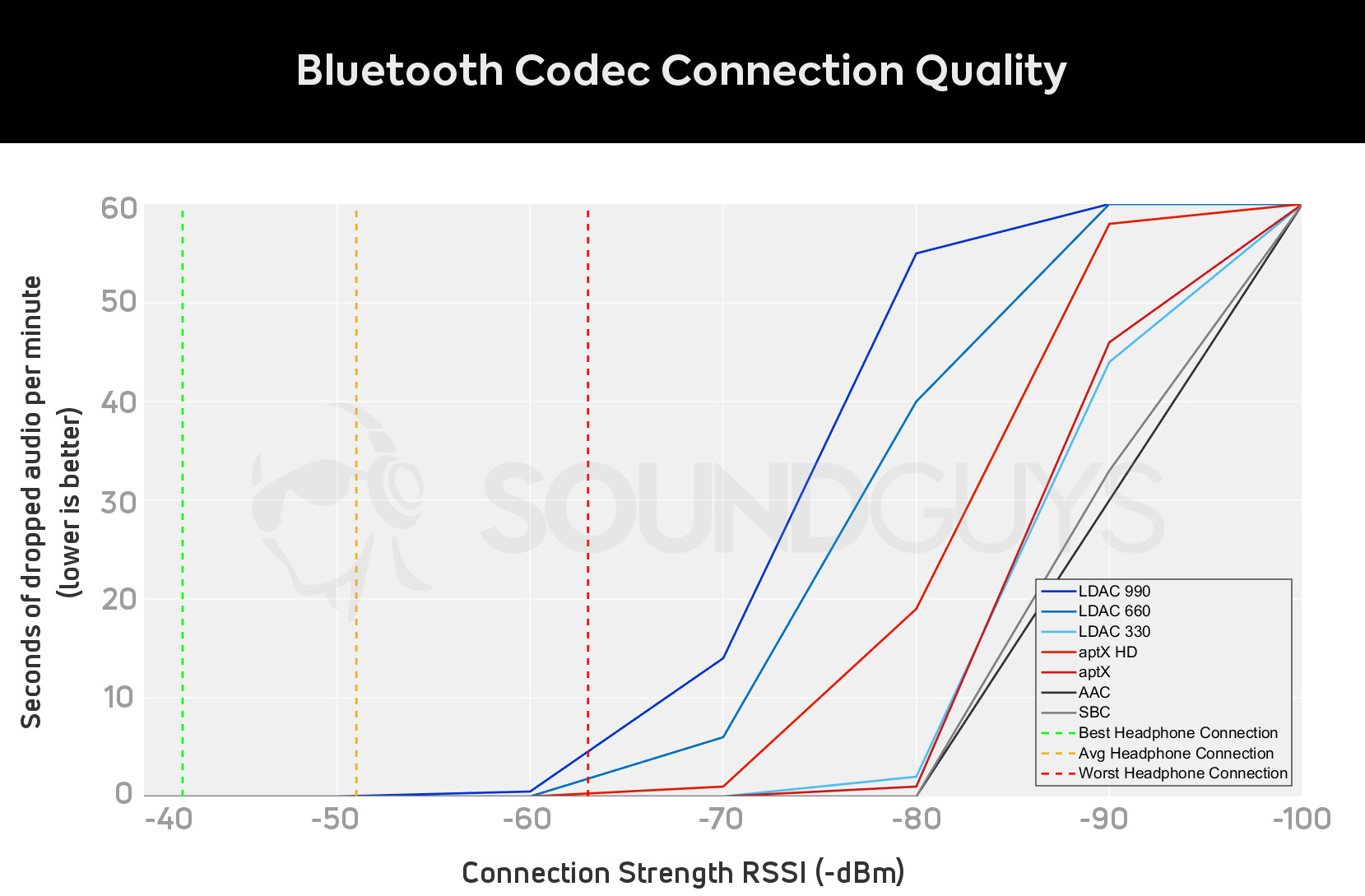

This graph plots a rough guide to the seconds of audio dropped (or skipped) as the Bluetooth signal quality worsens. As a ballpark, a Received Signal Strength Indication (RSSI) of higher than -60dBm guarantees real-time high bandwidth data transfer, -70dBm requires a lower bandwidth, and a connection weaker than -80dBm isn’t enough for real-time data. These are reflected quite nicely in our results.
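As a rough illustration of those thresholds, here is a minimal Python sketch that sorts an RSSI reading into one of three tiers. It is not Sony’s adaptive bit rate logic, which is not documented here. The function name and the treatment of readings between the named points are assumptions made for the example.

```python
def ballpark_link_quality(rssi_dbm: float) -> str:
    """Classify a Bluetooth RSSI reading using the rough thresholds in the text.

    Assumption: anything from -60dBm down to -80dBm is treated as one
    "reduced bandwidth" tier, since the text only names single points.
    """
    if rssi_dbm > -60:
        return "high bandwidth"
    if rssi_dbm >= -80:
        return "reduced bandwidth"
    return "too weak for real-time audio"


# Sample readings: two figures quoted in this article plus a weak signal.
for reading in (-49, -63, -85):
    print(reading, "dBm ->", ballpark_link_quality(reading))
```

Treat the output as a ballpark only, since real-world dropouts also depend on interference and on how each device handles retransmission.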

Most codecs (including SBC, AAC, and LDAC 330) only begin to drop packets at around -80dBm, meaning that some significant interference or distance is required before they drop out. LDAC’s 990 and 660kbps settings cut it very fine, requiring a very strong connection to avoid the occasional stutter. It’s no wonder that the 990kbps setting is rarely used, as there’s a chance of stuttering just below -60dBm, making a reliable connection difficult. Qualcomm’s aptX HD offers a little more headroom, but it’s still susceptible to interference.

The graph also plots the best, worst, and average RSSI for a selection of Bluetooth headphones, measured from the pocket to ear. Unsurprisingly, our worst performers are true wireless earbuds that hit -63dBm. Radio waves struggle to pass through the body, so even putting your hand in your pocket can reduce signal quality.

990kbps and 660kbps data rates push Bluetooth to the limits of stability.

We also took a look at the Sony WH-1000XM2 and WH-1000XM3, both LDAC wireless Bluetooth headphones. The average connection strength is about -51 and -49dBm respectively, with lows ending up closer to -60dBm when arms and hands get in the way. In other words: Sony headphones sport connections fast enough for LDAC’s highest quality settings, but don’t offer a lot of headroom to guarantee a stutter-free experience in less-than-ideal environments. In those situations, the codec will always fall back to its 330kbps setting.

Sony’s LDAC is not really Hi-Res, but that’s okay

That’s a lot of data to take in, but the bottom line is that Sony’s LDAC technology doesn’t technically provide true Hi-Res audio over Bluetooth, though it does get pretty close in some contexts. Technically, the 990kbps version of the codec reaches all the way to 48kHz (and is the only codec able to do so). However, its resolution and noise floor are nowhere near 24 bits, and are worse than 16 bits above 15kHz. Given that most people’s hearing diminishes starting at the high frequencies, it’s entirely possible that many won’t notice the difference.

Compared to the capabilities of a wired connection, LDAC is certainly not Hi-Res. But whether you'll hear the difference is another matter.

Actually using LDAC’s highest-quality settings can be tough, depending on your setup and listening environment. Most smartphones I tested default to 660kbps or lower in good conditions, but that’s not a guarantee. The only way for consumers to change this is to dive into Android’s developer settings, hidden away in the operating system’s menus. Furthermore, the codec pushes Bluetooth’s data speeds to such limits that reliable connections for “Hi-Res” are far from guaranteed. Even 660kbps will struggle in less-than-ideal environments.

Ultimately, LDAC users will likely spend a fair bit of time listening to the 330kbps version. Unfortunately, the available resolution and 18kHz cut-off frequency are objectively inferior to CD quality, Qualcomm’s aptX, and SBC.

Frequently asked questions

Is LDAC lossless?

A CD (or equivalent FLAC / lossless file) is 2 channels of 16-bit samples at a sampling frequency of 44.1kHz, producing a data rate of 2 x 16 x 44,100 = 1,411kbps, which is higher than LDAC’s highest data rate of 990kbps.

Therefore, some data (almost a third) must be thrown away, and LDAC cannot be considered lossless, although it is the closest you can currently get over a Bluetooth connection.
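The same arithmetic can be written as a short Python sketch. The function and constant names are illustrative, and the 24-bit/96kHz case is included only for comparison with the Hi-Res claim discussed earlier.

```python
def pcm_bitrate_kbps(channels: int, bit_depth: int, sample_rate_hz: int) -> float:
    """Raw (uncompressed) PCM data rate in kilobits per second."""
    return channels * bit_depth * sample_rate_hz / 1000


LDAC_MAX_KBPS = 990  # LDAC's highest bit rate setting

cd = pcm_bitrate_kbps(2, 16, 44_100)      # 1411.2 kbps
hi_res = pcm_bitrate_kbps(2, 24, 96_000)  # 4608.0 kbps

print(f"CD: {cd:.1f} kbps, LDAC at 990kbps carries {LDAC_MAX_KBPS / cd:.0%} of it")
print(f"Hi-Res: {hi_res:.1f} kbps, LDAC at 990kbps carries {LDAC_MAX_KBPS / hi_res:.0%} of it")
```

At 990kbps, LDAC has room for about 70% of the raw CD data rate and about 21% of the raw 24-bit/96kHz rate.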

Does the iPhone support LDAC?

No. The Apple iPhone does not support LDAC.