
High bitrate audio is overkill: CD quality is still great

Everybody wants great audio, but sometimes our quests for improvement lead us down some really dark and… dumb… corridors. As it is with many disciplines, with music a little knowledge goes a long way. You may have seen discussions online surrounding bit depth and sample rates, but what you probably don’t know is that there isn’t some magic setting that’ll make everything sound better. That’s because digital music as it is today has already left our perceptual limits in the rear-view mirror. You don’t need crazy-high-quality files unless you’re creating music that needs heavy editing.

While I’m no stranger to delivering bad news, like any good journalist, I show my evidence. The truth of the matter is that humans just can’t perceive the difference between files at a certain point, and you shouldn’t get sucked into the marketing hype if it’s more expensive than what you have already. While I have no doubt that formats like MQA are technologically impressive, most won’t really be able to appreciate the increased fidelity. Chances are near 100% that your current library is perfectly fine.

Editor’s note: this article was updated on October 1, 2025, to remove outdated information.

What is bitrate?

Put simply, the bitrate of an audio file or stream is the amount of data transferred per second. In broad terms, the more data a file has, the more information is stored within. However, bitrate on its own doesn’t tell you the more important aspects of a digital file: bit depth and sample rate.

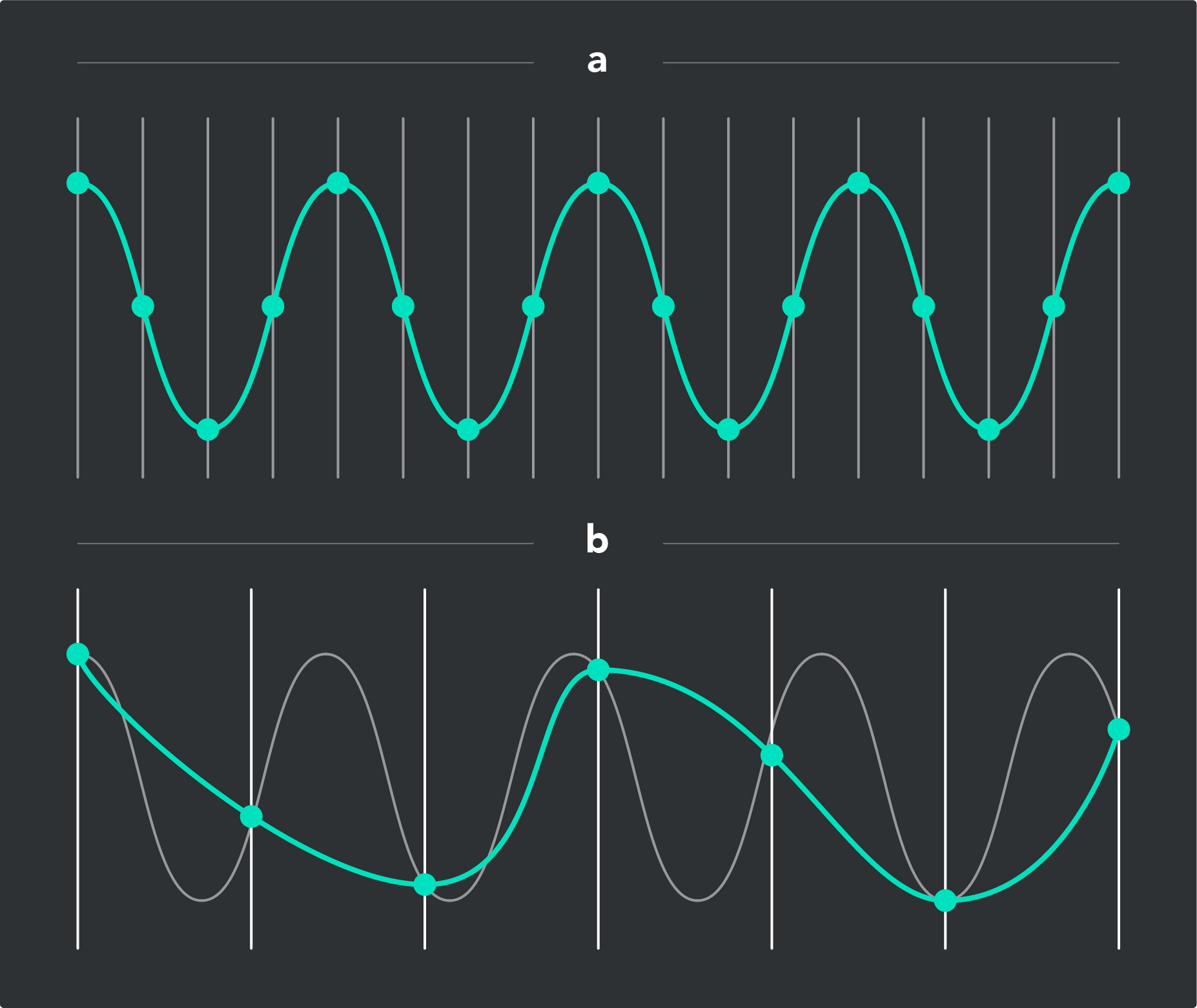

When a song is converted into a digital signal, it is sampled a set number of times per second (sample rate), with a certain amount of data (bit depth) per sample. You can then take these figures and find the theoretical maximum bitrate: the higher the bit depth and/or sample rate of the file, the greater the bitrate. For example, a CD uses a 16-bit signal that’s sampled 44,100 times per second (44.1kHz). The bitrate for such a stereo file would be 1,411kbps if it weren’t compressed in any way.
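If you want to check that figure yourself, here is a minimal sketch of the arithmetic. It assumes two-channel stereo, which is how the 1,411kbps number works out; the function name is made up for illustration.

```python
def pcm_bitrate_kbps(bit_depth: int, sample_rate_hz: int, channels: int = 2) -> float:
    """Bitrate of uncompressed PCM audio, in kilobits per second."""
    # bits per sample x samples per second x number of channels
    return bit_depth * sample_rate_hz * channels / 1000


cd_quality = pcm_bitrate_kbps(16, 44_100)   # CD: 16-bit, 44.1kHz, stereo
hi_res = pcm_bitrate_kbps(24, 96_000)       # a common "hi-res" format

print(f"CD quality: {cd_quality:.1f} kbps")
print(f"24-bit/96kHz: {hi_res:.1f} kbps")
```

The 24-bit/96kHz file needs more than three times the data of the CD-quality one before any compression is applied.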

However, some MP3 and other compressed digital files are able to get away with much smaller bitrates by compressing the audio. For streaming services to work, for example, codecs with lower bitrates (eg, Opus, AAC) are prized for their ability to get you the tunes you want without exploding your data charges when you listen from your phone. Ever since the MP3 hit the scene, more and more effort has been expended to shave bitrates without negatively affecting audio quality.

Those chasing audio quality have the opposite impulse: higher bitrates, sample rates, and bit depths should be better for quality, right? Many hi-res audio files for sale online offer this, but is the file really that much better, or what’s going on?

You only need a sample rate of 44.1kHz

If you’ve looked at your music player’s information tab, you may notice some of your songs have sample rates of 44.1kHz or 48kHz. You may also notice that your DAC or phone supports files with sample rates up to 384kHz.

That’s overkill. Nobody on God’s green Earth is going to know or care about the difference, because our ears just aren’t that sensitive. Don’t believe me? It’s time for some math. To understand what the limit of human perception is for sample rates, we need to identify three things:

- The upper limit of the frequencies you can hear

- The minimum sample rate needed to cover that range (2x the highest audible frequency in Hz)

- Whether the sample rate of your music files exceeds that number

Sounds simple enough, and it is. The most common range of human hearing tops out at about 20kHz, which is 20,000 cycles per second. For the sake of argument, let’s expand that range to the uppermost limits of what we know is possible: 22kHz. If you want to check out the limits of your hearing, use this tool to find the upper limits of your perception. Just be sure you don’t set the volume too loud before you do it. If you’re over 20, that number should be about 16-17kHz, lower if you’re over 30, and so on.


Using the Nyquist-Shannon sampling theorem, we know that a sample rate of more than two samples per period of the highest frequency is sufficient to reproduce a signal (in this case, your music). 2 x 22,000 = 44,000, which is just under the 44,100 samples per second offered by a 44.1kHz sample rate. Anything above that number is not going to offer you much improvement because you simply can’t hear the frequencies that an increased sample rate would unlock for you.
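The same check can be sketched in a few lines of code. This is only the back-of-the-envelope version of the math above; the function names and the list of hearing limits are chosen for illustration.

```python
def min_sample_rate_hz(highest_audible_hz: float) -> float:
    """Nyquist rate: twice the highest frequency you want to capture."""
    return 2 * highest_audible_hz


def covers_hearing(sample_rate_hz: float, highest_audible_hz: float) -> bool:
    """True if the sample rate is above the Nyquist rate for that hearing limit."""
    return sample_rate_hz > min_sample_rate_hz(highest_audible_hz)


CD_SAMPLE_RATE_HZ = 44_100

# Example hearing limits: a typical adult, the textbook limit, the generous limit
for limit_hz in (16_000, 20_000, 22_000):
    needed = min_sample_rate_hz(limit_hz)
    ok = covers_hearing(CD_SAMPLE_RATE_HZ, limit_hz)
    print(f"hearing limit {limit_hz} Hz -> need > {needed:.0f} Hz, 44.1kHz is enough: {ok}")
```

Plug in the number you got from a hearing test and see how much headroom a 44.1kHz file already gives you.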

Additionally, the frequencies you hear at the highest end diminish over time as you age, get ear infections, or are exposed to loud sounds. For example, I can’t hear anything above 16kHz. This is why to older ears, music has less audible distortion if you use a low-pass filter to get rid of sound that you can’t hear — it’ll make your music sound better even though it’s not technically as “high-def” as the original file. If your hearing can’t reach anything higher than 22.05kHz, then the 44.1kHz file can handily outresolve the range of frequencies you can hear.

16-bit audio is fine for everyone

The other audio quality myth is that 24-bit audio will unlock some sort of audiophile nirvana because it’s that much more data-dense, but in terms of perceptual audio any improvement will be lost on human ears. Capturing more data per sample does have benefits for dynamic range, but the benefits are pretty much exclusively in the domain of recording.

Though it’s true a 24-bit file will have much more dynamic range than a 16-bit file, its 144dB of dynamic range is enough to resolve a mosquito next to a Saturn V rocket launch. While that’s all well and good, your ears can’t actually hear that difference in sound due to a phenomenon called auditory masking. Your physiology makes quieter sounds muted by louder ones, and the closer they are in frequency to each other, the more they’re masked out by your brain. With enhancements like dithering, 16-bit audio can “merely” resolve the aforementioned mosquito next to a 120dB jet engine takeoff. That’s still dramatic overkill when you consider that a comfortable listening volume is usually something like 75dB.
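For the curious, the 144dB figure falls out of a simple formula: each bit doubles the number of levels a sample can take, and each doubling is worth about 6dB. The sketch below uses that plain ratio of the largest value to the smallest step. It does not model dithering, which is what the 120dB figure for 16-bit audio relies on, and the function name is just for illustration.

```python
import math


def dynamic_range_db(bit_depth: int) -> float:
    """Theoretical dynamic range of linear PCM: 20 * log10(number of levels)."""
    return 20 * math.log10(2 ** bit_depth)


for bits in (16, 24):
    print(f"{bits}-bit: {dynamic_range_db(bits):.1f} dB")
```

Undithered 16-bit audio comes out at roughly 96dB by this measure, which is already well past the 75dB or so of a comfortable listening volume.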

However, it’s the quieter sounds that many audiophiles claim make the big difference, and that’s partially true. For example, a wider dynamic range allows you to raise the volume farther without raising audible noise, and that’s the big sticking point here. While 24-bit and even 32-bit files have their place in the mixing booth, do they offer any benefit for MP3, FLAC, or OGG files?

Hey kids, try this at home!

While my colleague Rob at Android Authority already proved this with an oscilloscope and some direct explanations, we’re going to perform an experiment that you can do yourself, or just read along if you don’t mind spoilers. After scouring the web, I found a couple of files on Bandcamp that were actually released as 24-bit lossless files. Many of the ones I found on purported “HD Audio” sites were simply upconverted from 16-bit, meaning they were identical in every way but price. Next, I followed this procedure:

- Make a copy of the original 24-bit file

- Open in your audio editing program of choice (I suggest Audacity), and invert the file; save as 16-bit/44.1kHz WAV

- Open both the parent file and your newly edited file, and export them together as one track

- Open the mixed-down track in any program that allows you to view what’s called a spectrogram

- Giggle to yourself at spending a lot of money on Hi-res audio
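If you’d rather not open an audio editor, the idea behind the procedure can be sketched in code. This is a simplified stand-in, not the exact test above: it uses a made-up two-tone test signal instead of a real track, only reduces the bit depth (no resampling to 44.1kHz), and applies no dither. The helper names are hypothetical.

```python
import math


def quantize(samples, bit_depth):
    """Round float samples in [-1.0, 1.0) to the nearest step of a signed integer grid."""
    scale = 2 ** (bit_depth - 1)
    return [round(s * scale) / scale for s in samples]


def peak_dbfs(samples):
    """Peak level relative to full scale, in dB."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")


SAMPLE_RATE_HZ = 96_000

# Hypothetical test signal: two sine tones, a tenth of a second long
signal = [
    0.5 * math.sin(2 * math.pi * 997 * n / SAMPLE_RATE_HZ)
    + 0.25 * math.sin(2 * math.pi * 7001 * n / SAMPLE_RATE_HZ)
    for n in range(SAMPLE_RATE_HZ // 10)
]

hi_res = quantize(signal, 24)     # stands in for the 24-bit original
cd_depth = quantize(signal, 16)   # stands in for the 16-bit copy

# Inverting one copy and mixing the two is the same as subtracting them
residual = [a - b for a, b in zip(hi_res, cd_depth)]

print(f"Peak of the difference: {peak_dbfs(residual):.1f} dBFS")
```

With this synthetic signal the leftover should peak somewhere around -96dBFS, about half of one 16-bit step. A real track that also gets resampled will leave a different residue, so treat the number as a ballpark only.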

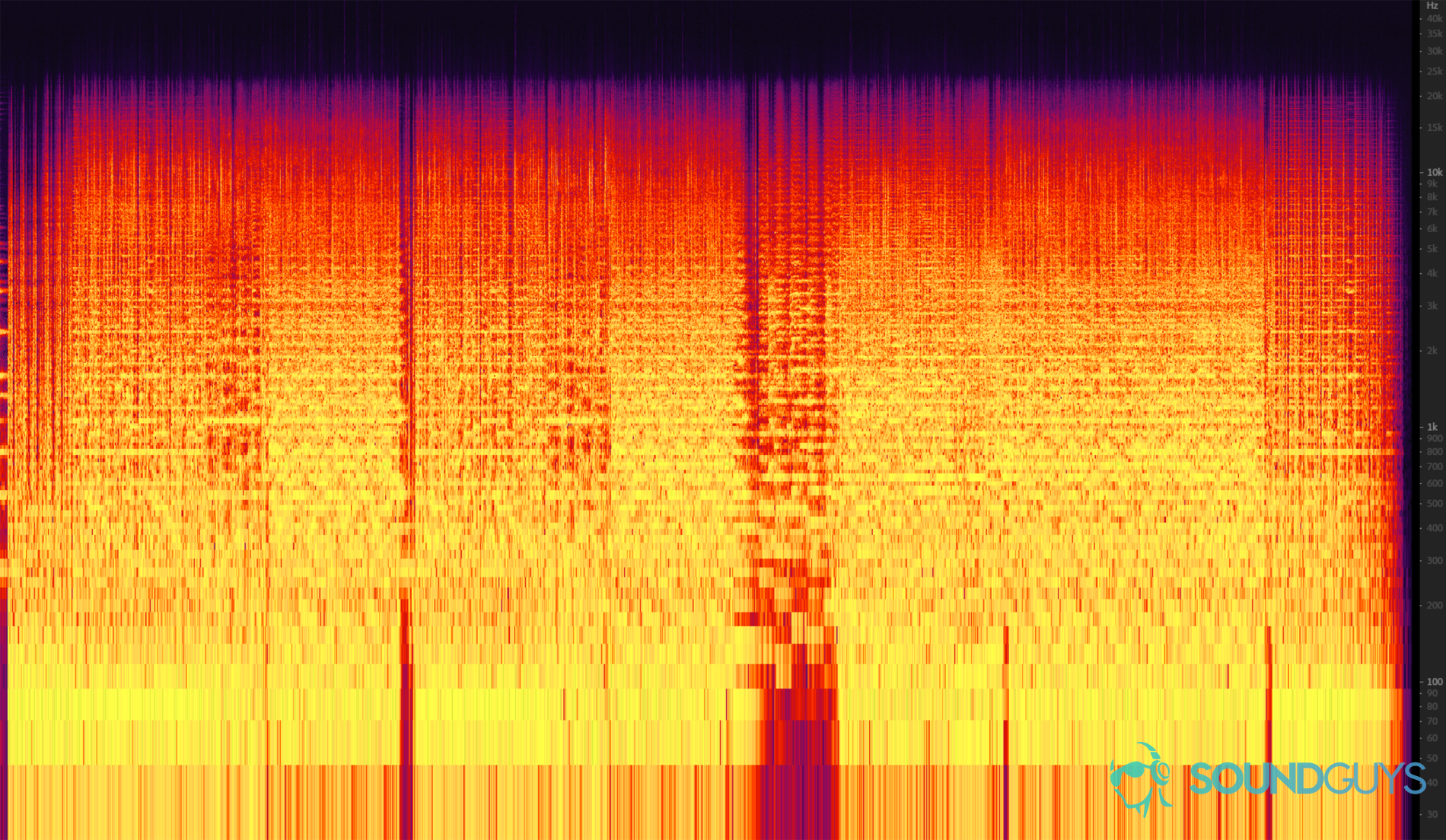

Essentially what we just did here is take a 96kHz/24-bit file, then subtract all the data that you can hear in a CD-quality version of itself. What’s left is the difference between the two! This is the exact same principle that active noise canceling is based on. This is the result I got:

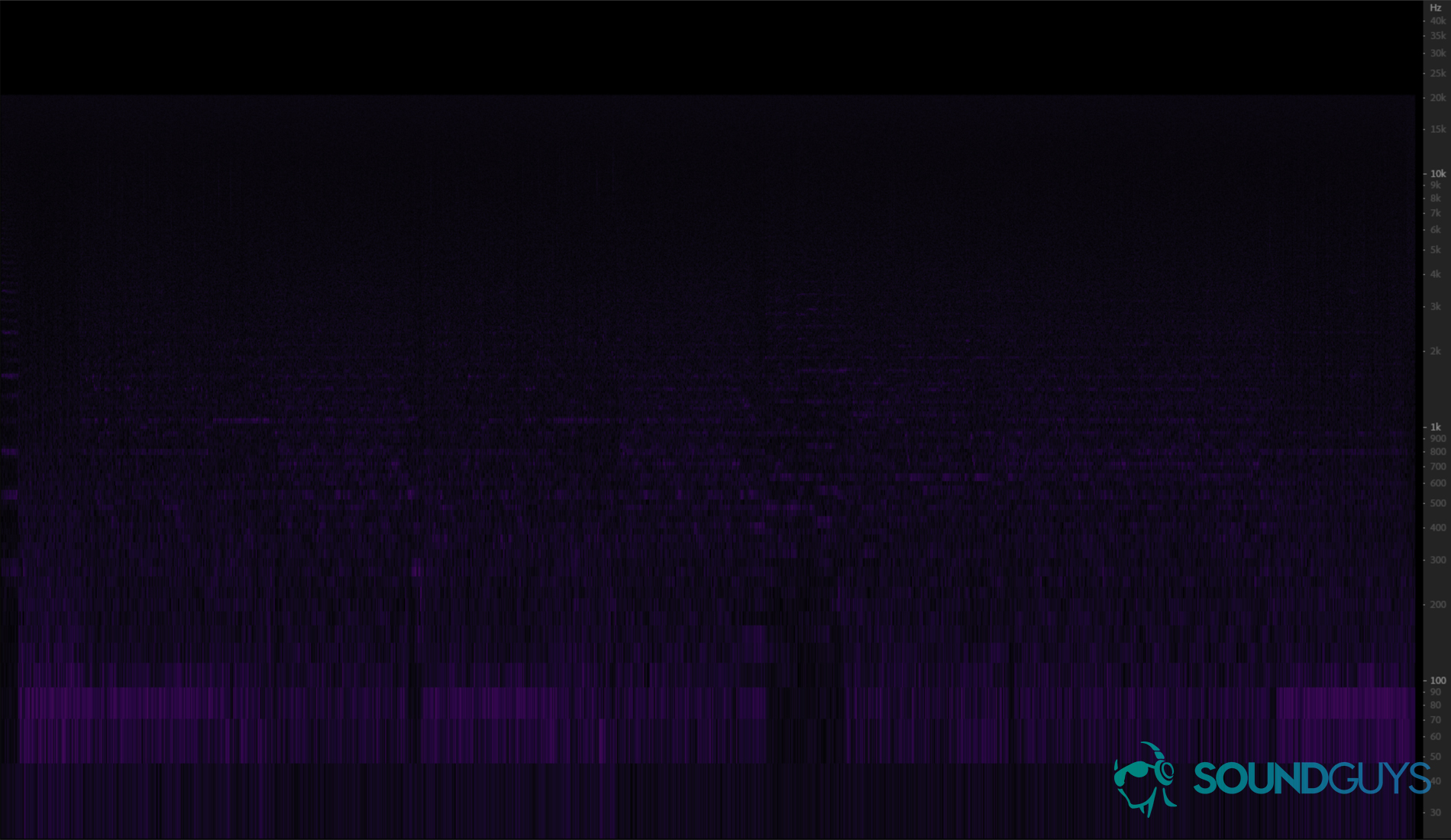

Okay, so there’s a bit of difference in the uppermost reaches of the file, but that’s out of the range of human hearing. In fact, you should probably just filter that out anyway. So let’s show what a human can actually hear by applying a low pass at 20kHz just to cover our bases. Et voila: a final peak of… -85dB at best. Okay, we’re kinda skirting the edges of audibility here, but here’s the problem — in order to actually hear any of this extra data, you need to:

- Be listening to music at a level that’s unsafe to listen to for more than 1 minute (96+dB)

- Have microphones for ears

While that last point may seem a bit snarky, we know that your brain filters out sounds that are close in frequency to each other. So when you’re listening to music, you’re actually not hearing all the sound at once, you’re just hearing what your brain has separated out for you. So in order to hear the difference between 24-bit/96kHz files and CD-quality audio, the individual sounds can only occupy a very narrow frequency range, must be very loud, and the other notes that occur in the same time period must be very far apart in terms of frequency.


If we’ve learned anything from this Yanny/Laurel fiasco, it’s that a human voice does not fit these criteria (Editor’s note: It’s “Laurel”). So, really, the most likely places you’d actually be able to hear the differences between the two are in low-frequency notes with somewhat muted harmonics. But there’s a catch: Humans are really bad at hearing low-frequency sounds. In order to hear these notes at equal loudness to higher-frequency notes, you’ll need anywhere from 10 to 40dB of extra power. So those peaks at -87dB in ranges from 20-90Hz may as well be -97 to -127dB, which is outside the range of human hearing. There is no safe listening level to hear the difference between these files.

Cool, huh? It’s always good to know that anyone coming along and telling you that your music collection has to be re-bought because it’s not “high-def” enough is demonstrably wrong. If you’re a budding audiophile, the thing you need to take away from this is to relax: we’re in a golden age of audio here — CD quality is more than fine enough, just enjoy your music! While some may seek higher-quality audio, it’s not necessary if all you want to do is listen to good music.
