aptX and aptX HD: Bluetooth audio codecs explained

March 14, 2023

Let’s face it: Bluetooth audio quality has never really been good enough to satisfy the pickiest listeners out there, and a number of competing “high quality” codecs have appeared to fill the void in quality left by the default low-complexity sub-band codec (SBC) found on all Bluetooth headsets. Qualcomm’s aptX is the most commonly supported alternative in both headphone and smartphone products, as it “delivers CD-like audio quality” while aptX HD is “indistinguishable from High Res audio” (according to Qualcomm). Bold claims for Bluetooth technology, but is any of that true?

Fitting audio of any reasonable quality into a small enough package to send over a data-limited connection is inevitably a matter of compromise. Codecs regularly trade away audio quality to save on bandwidth, which often means distortion, added noise, and compression artifacts. So how does aptX achieve its audio quality, and how does it compare to the higher quality aptX HD?

Editor’s note: this article was updated on March 14, 2023, to add more context surrounding the codec family.

What is aptX?

Before we go any further, it’s worth pointing out what aptX actually, y’know, is. Qualcomm has developed a number of Bluetooth codecs in the aptX family over the years, including aptX, aptX HD, aptX Adaptive, aptX Lossless, and aptX Low Latency. Each of these is what’s called a codec: a way for a source device (like a phone or computer) to encode audio for transmission, and for a listening device (like headphones) to decode it. The codec gives both devices an agreed format for encoding and decoding the data, so that what arrives at the other end is usable.

While Sub-Band Codec (SBC) is mandatory for all Bluetooth devices to have as a lingua franca of sorts, not all devices need to be able to use aptX or any of its family. In general, aptX and its related codecs are prized for their audio quality and in some cases latency. However, this can vary depending on which flavor of aptX you’re using. Today, we’re only looking at aptX and aptX HD.

What is frequency response?

The two major factors that determine the size of digital audio files are sample rate (frequency), and bit depth. By removing a chunk of high-frequency content that’s tough for people to hear anyway, cheeky codecs could make major space savings. That certainly wouldn’t count as CD-like quality though, so our first port of call is to check the audio bandwidth.
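To put rough numbers on that, here is a minimal sketch of how sample rate and bit depth set the data rate of uncompressed audio. The function name and the choice of stereo as the default are ours, purely for illustration.

```python
def pcm_bitrate_kbps(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> float:
    """Uncompressed PCM bitrate in kilobits per second."""
    return sample_rate_hz * bit_depth * channels / 1000


cd = pcm_bitrate_kbps(44_100, 16)     # CD-quality stereo
hires = pcm_bitrate_kbps(96_000, 24)  # one common Hi-Res format

print(f"CD: {cd:.1f} kbps")               # CD: 1411.2 kbps
print(f"24-bit/96kHz: {hires:.1f} kbps")  # 24-bit/96kHz: 4608.0 kbps
```

Halving either the sample rate or the bit depth halves the bitrate, which is why a codec that quietly trims high frequencies can save so much space.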

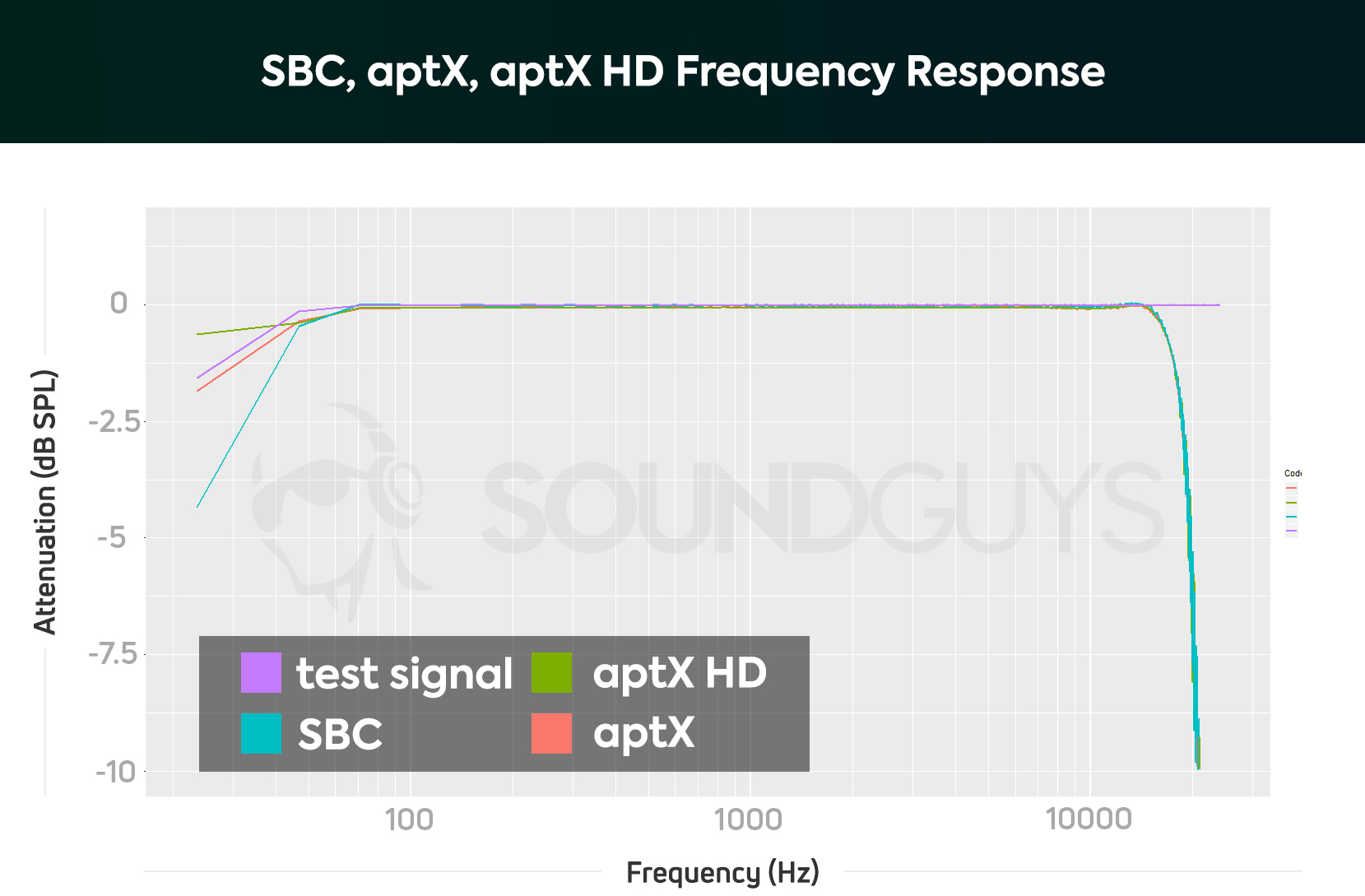

How to read the graph: the graph shows the relative levels of frequencies across the audible spectrum, or the measured “frequency response.” For a 48kHz audio file, an ideal codec passes frequencies from 0Hz to almost 24kHz without any attenuation (remaining at 0dB the whole way across).

Both aptX and aptX HD share the same basic frequency response, which reproduces virtually all the audible spectrum untouched. This means that your music won’t have any tones that are inexplicably changed by the Bluetooth link. As expected, neither codec handles 96kHz files; both are capped at 48kHz.

The codec applies a low pass filter to the audio with minimal ripple and a corner frequency around 18.9kHz. This is right up against the limits of human hearing, and therefore preserves practically the entire range of musical content in the original file. So far, so good on the claims of providing sound that’s indistinguishable from CD quality.

This frequency response is a very close match for the default SBC Bluetooth codec supported by all devices, which also handles sample rates up to 44.1/48kHz. The only noticeable difference in the measurement is slightly more ripple in the SBC passband at very high frequencies. This ripple could indicate more high-frequency distortion than aptX, so we’re going to have to dig deeper to discern the differences.

How do noise and distortion affect aptX and aptX HD?

Bluetooth codecs make most of their bandwidth savings by splitting audio into multiple frequency bands (aptX uses four) and reducing the number of bits used to encode each band. In other words, codecs like aptX don’t spend bits on sounds you can’t hear.
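As a rough illustration of how per-band bit budgets turn into a bitrate, here is a small sketch. The per-band bit counts are commonly cited figures for aptX and aptX HD rather than anything measured or stated in this article, so treat them as assumptions; the function name is ours too.

```python
# Assumed bits per sub-band, lowest band first (commonly cited, not from this article).
APTX_BITS = (8, 4, 2, 2)
APTX_HD_BITS = (10, 6, 4, 4)


def subband_bitrate_kbps(bits_per_band, sample_rate_hz=48_000, channels=2):
    """Bitrate when each of N equal-width bands runs at 1/N of the sample rate."""
    n_bands = len(bits_per_band)
    band_rate_hz = sample_rate_hz / n_bands
    return sum(bits_per_band) * band_rate_hz * channels / 1000


print(f"aptX:    {subband_bitrate_kbps(APTX_BITS):.0f} kbps")     # aptX:    384 kbps
print(f"aptX HD: {subband_bitrate_kbps(APTX_HD_BITS):.0f} kbps")  # aptX HD: 576 kbps
```

Under these assumptions, the lowest band gets the most bits and the top two bands get the fewest, and the total works out to a quarter of the uncompressed stereo bitrate.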

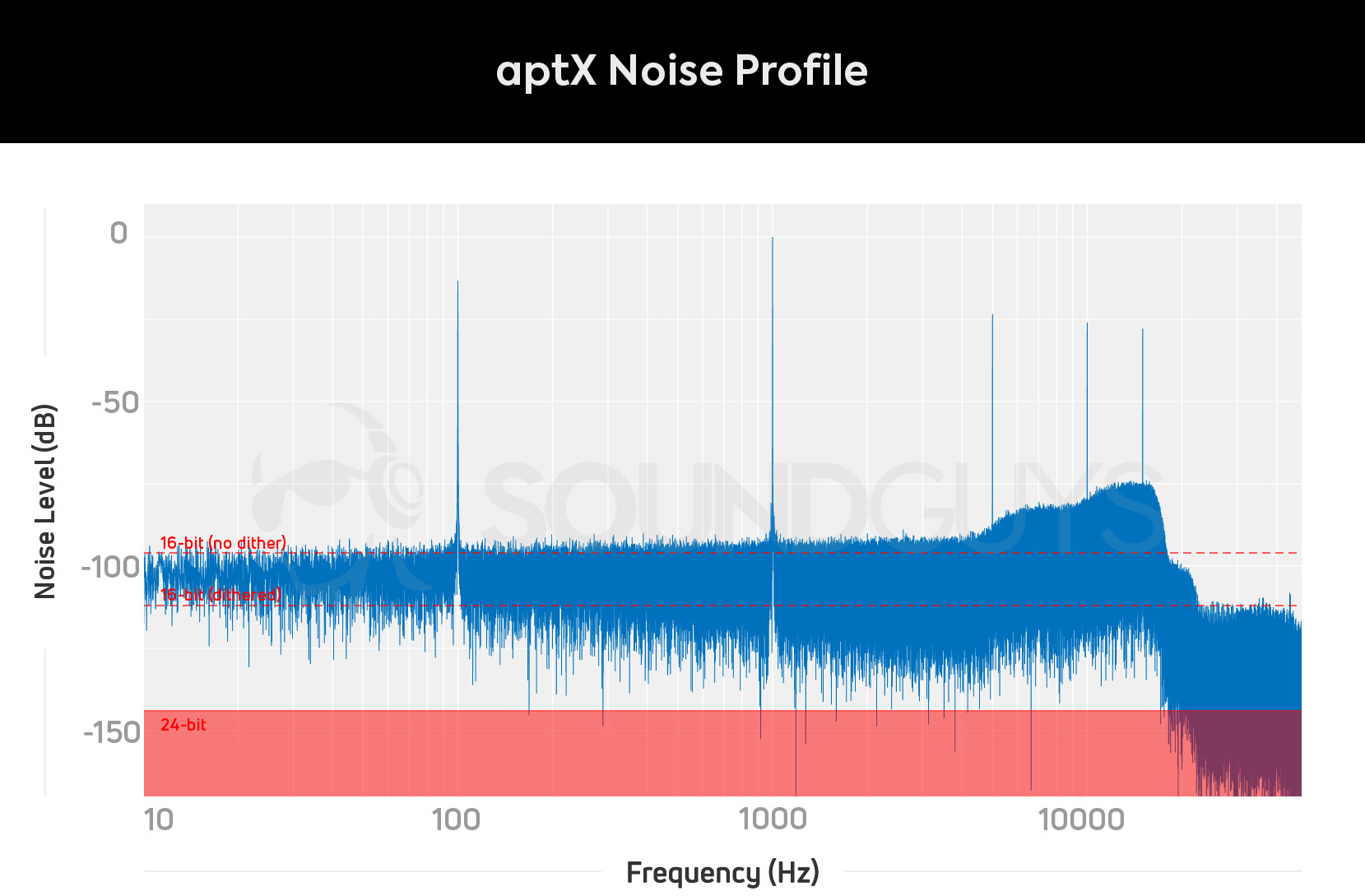

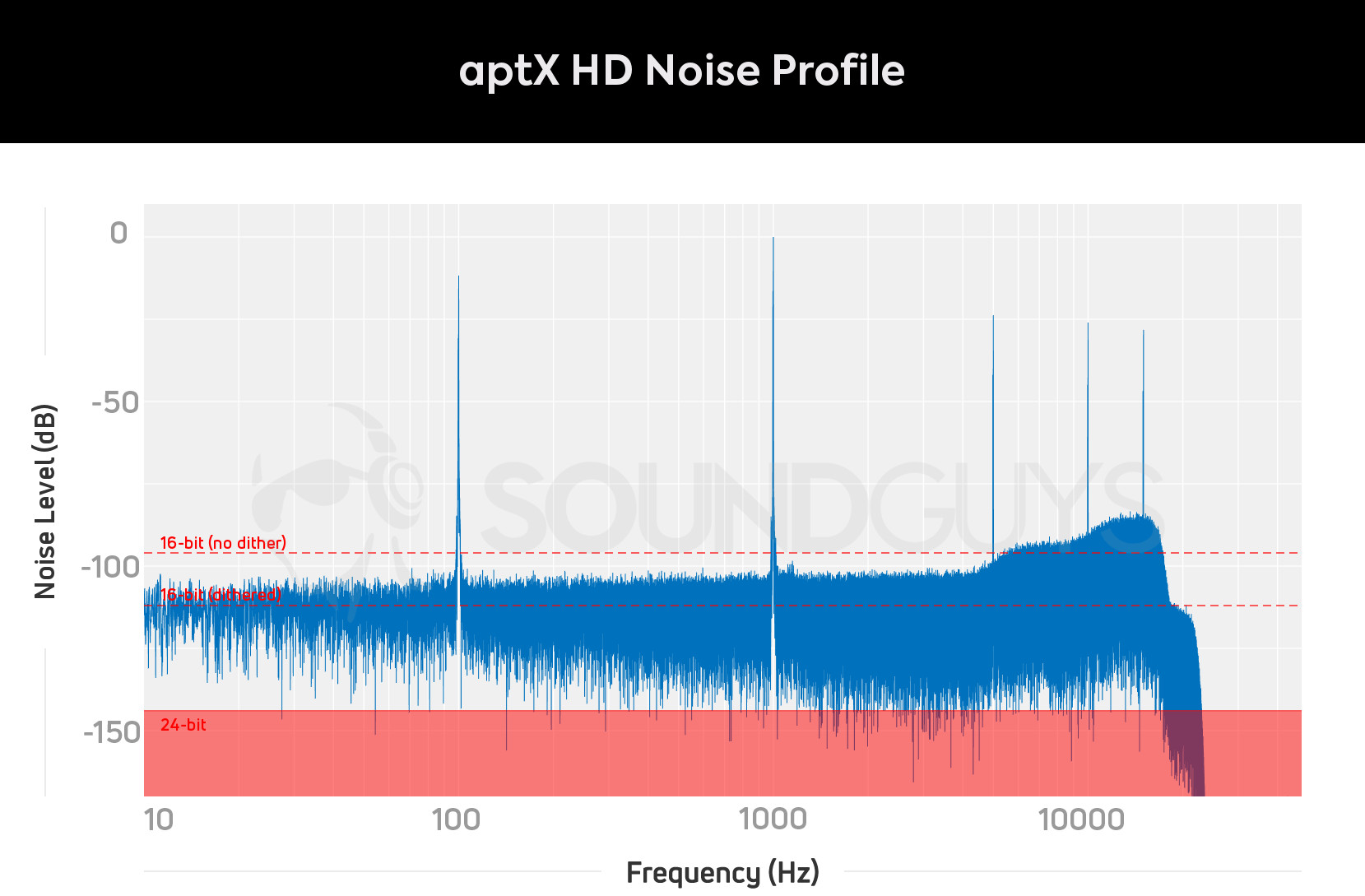

If you’re unfamiliar with the concept of noise floor and quantization, it’s really the same thing as talking about bit depth. Noise and bit depth both refer to the signal level where the music we want to hear becomes indistinguishable from the background electronic system noise, or in this case the codec encoding method. The lower the noise floor, the finer the details that can be reproduced, and the higher the quality. A 16-bit signal is capped at 96dB of dynamic range, 16-bit with dithering clocks in at around 112dB, and 24-bit allows for a maximum of 144dB. However, the very best hardware won’t give you more than about 124dB of capture, and 90dB is more than enough for headphone listening volumes.
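The undithered figures above fall straight out of the bit depth. A minimal sketch, using the standard relationship for linear PCM (the function name is ours):

```python
import math


def dynamic_range_db(bit_depth: int) -> float:
    """Theoretical dynamic range of linear PCM: 20 * log10(2 ** bits)."""
    return 20 * math.log10(2 ** bit_depth)


for bits in (16, 24):
    print(f"{bits}-bit: {dynamic_range_db(bits):.1f} dB")
# 16-bit: 96.3 dB
# 24-bit: 144.5 dB
```

Each extra bit doubles the number of available levels, which adds about 6dB. The dithered 16-bit figure depends on the dither and noise shaping used, so it isn’t covered by this formula.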

With that out of the way, here’s the plot of aptX and aptX HD noise versus frequency. How to read the graph: Lower amplitude values are better across all frequencies and should ideally be flat. Ignore the spikes – these are the test tones. Instead, focus on the top of the noisy wiggling bit of the graph at around -100dB. The dotted red line is the theoretical dynamic range of CD-quality files, and the red area is the limit of 24-bit audio.

aptX and aptX HD’s noise shapes look very similar. You can see that 20Hz to 5kHz offers approximately 90dB of range with aptX, and 102dB of range with aptX HD. At around 4-8kHz, the amount of noise increases to approximately -80dB with aptX and -92dB with aptX HD. Finally, the highest frequencies between 8kHz and 20kHz see more noise still, at approximately -74dB for aptX and -86dB for aptX HD. Neither of the two codecs introduces any obvious harmonic distortion to the test tones.

This variable noise floor is a result of aptX’s sub-band encoding, which gives each frequency band its own bit depth. The codec uses fewer bits at higher frequencies, resulting in more noise. The only difference between aptX and aptX HD is that the latter adds two extra bits of information across all of the codec’s frequency bands, giving you a 12dB improvement to the noise floor (each bit is worth roughly 6dB). This is great for subtle, high-frequency detail, which might get lost under the higher noise floor of regular aptX.
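As a quick sanity check, the sketch below takes the approximate aptX noise floors quoted above and lowers each by two bits’ worth of noise. The band labels and function name are ours, and the inputs are eyeballed from the plots, so this is a consistency check rather than a model of the codec.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # about 6.02 dB

# Approximate aptX noise floors quoted above (dB), lowest band first.
APTX_FLOOR_DB = {"low": -90, "mid": -80, "high": -74}


def floor_with_extra_bits(floor_db: float, extra_bits: int) -> float:
    """Noise floor after adding extra_bits of resolution to a band."""
    return floor_db - extra_bits * DB_PER_BIT


predicted_hd = {
    band: round(floor_with_extra_bits(db, 2)) for band, db in APTX_FLOOR_DB.items()
}
print(predicted_hd)  # {'low': -102, 'mid': -92, 'high': -86}
```

The predicted values line up with the aptX HD floors quoted above, which is what you’d expect if two extra bits per band is the only change.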

So why would you want to use a variable bit depth like this? Your ears, which differ in sensitivity at different frequencies, are most sensitive at 1kHz—lessening from 4kHz up to 8kHz, and then losing most hearing ability somewhere between 10kHz and 20kHz (depending on the age and health of your inner ear). It’s not a coincidence that the aptX noise floor matches this pattern. Science tells us that your ears are less sensitive to noise at high frequencies, making it a smart place for Bluetooth codecs to save on some bandwidth.

16 vs 24 bit: What’s the difference?

The topic of noise floor nicely circles back around to the codec specs. aptX’s -90dB noise floor is close to 16-bit audio but isn’t quite a technical match for a properly dithered CD track. At real-world listening volumes, though, the audible differences are going to be negligible, so Qualcomm’s claims of “CD-like” quality appear to stack up.

aptX HD supports 24-bit audio, yet it’s clear that the noise floor doesn’t extend low enough to leverage the full capabilities of a 24-bit track, especially in the high-frequency bands. In fact, dithered 16-bit audio’s -112dB noise floor extends further. Whether aptX HD is “indistinguishable from Hi-Res” is a touchier issue, as there isn’t time to get into the actual differences between CD and Hi-Res here. Suffice to say that it takes a pretty good stab at covering the limits of human audio perception. With that in mind, 16-bit audio files should benefit from the lower noise profile of aptX HD too.

I confirmed this with a test, which shows only margin-of-error differences in the noise floor between a properly dithered 16-bit file and a Hi-Res 24-bit file when played using aptX HD. However, both files benefit from the lower noise floor and the extra headroom at high frequencies. If you have aptX HD capable gear, it’s the better codec even if your audio library consists of 16-bit files.

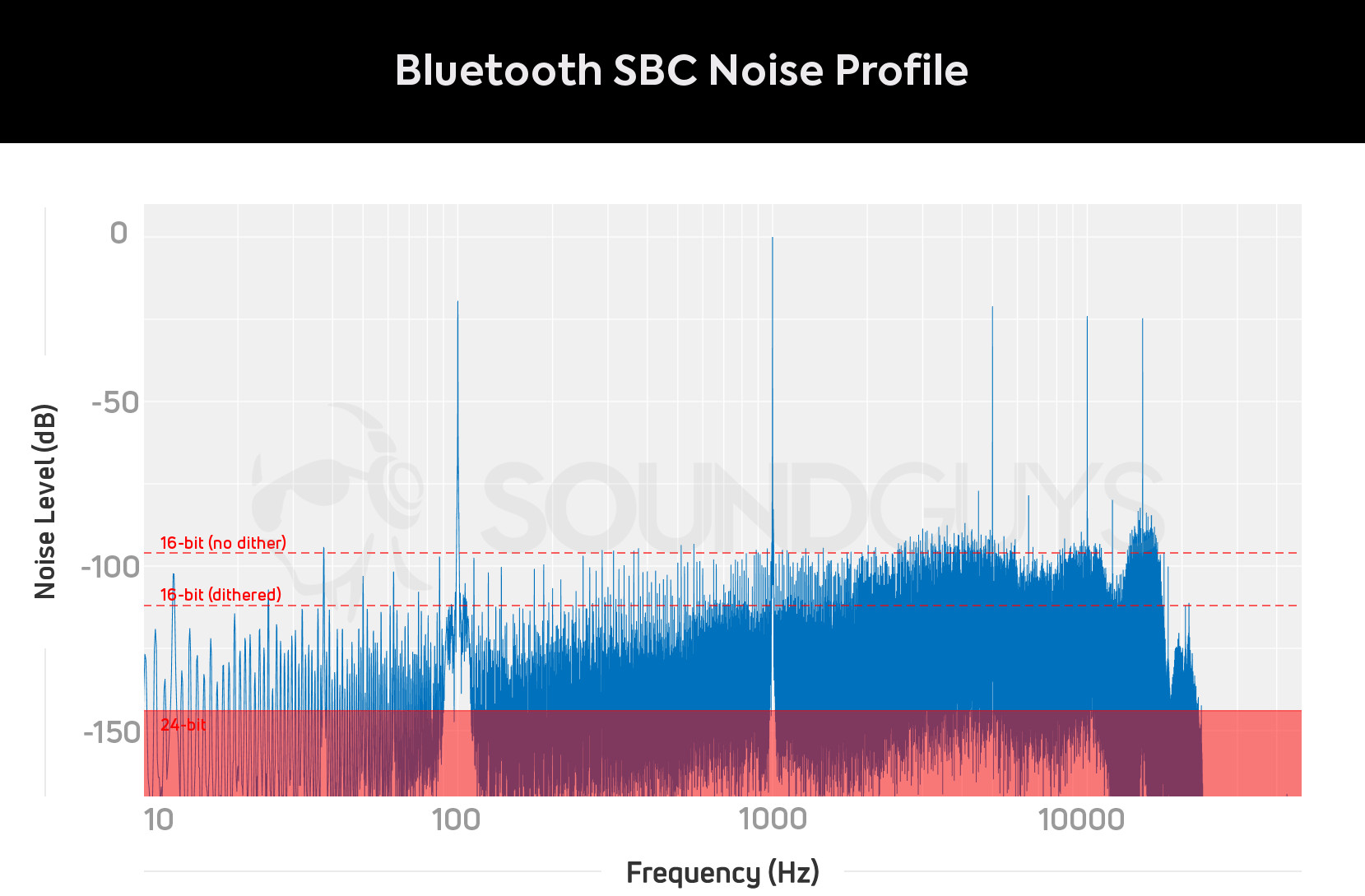

Is aptX or aptX HD better than Bluetooth’s default SBC?

SBC’s frequency range is very similar to aptX’s, with a similar roll-off occurring just before 19kHz. The biggest differences are in noise floor and high-frequency distortion. SBC is comparable to aptX at lower frequencies, but performs notably worse than aptX HD. However, SBC’s noise floor begins to creep up a little earlier, at 2.5kHz compared to 5kHz for Qualcomm’s codecs. The noise floor for music below 2.5kHz is -92dB, a match for aptX but not as good as aptX HD’s -102dB. SBC’s noise floor rises to -85dB between 2.5-5kHz and 8-11kHz, falls back to -90dB between 5-8kHz, and reaches highs of -80dB above 14kHz.
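To compare the three codecs at a given frequency, the quoted figures can be dropped into a small lookup. This is only a sketch: the values are the approximate readings given in this article, the band edges are rounded (we treat “above 14kHz” as 14-20kHz and split the aptX bands at 5kHz), and the function returns None where no figure was quoted.

```python
# Approximate noise floors quoted in this article, as (low_hz, high_hz, floor_db).
SBC = [(20, 2_500, -92), (2_500, 5_000, -85), (5_000, 8_000, -90),
       (8_000, 11_000, -85), (14_000, 20_000, -80)]
APTX = [(20, 5_000, -90), (5_000, 8_000, -80), (8_000, 20_000, -74)]
APTX_HD = [(20, 5_000, -102), (5_000, 8_000, -92), (8_000, 20_000, -86)]


def noise_floor_db(table, freq_hz):
    """Return the quoted floor for freq_hz, or None where no figure was given."""
    for low, high, floor in table:
        if low <= freq_hz < high:
            return floor
    return None


for freq in (1_000, 3_000, 12_000):
    row = [noise_floor_db(t, freq) for t in (SBC, APTX, APTX_HD)]
    print(freq, row)
# 1000 [-92, -90, -102]
# 3000 [-85, -90, -102]
# 12000 [None, -74, -86]
```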

SBC can use a larger number of sub-bands (either 4 or 8 depending on the configuration used), which allows for this more variable noise floor. With more bands to work with, SBC actually maps the contours of human hearing sensitivity better than aptX does.

However, the test shows a number of additional peaks in the high frequencies. Given that they stand so isolated, this doesn’t appear to be general noise. Instead, this is harmonic and intermodulation distortion—something we really want to avoid in high-quality audio systems and codecs. Neither of the aptX codecs exhibits the same issue.

SBC exhibits mixed-signal distortion; aptX does not.

To test this further, I also ran a traditional intermodulation distortion and a 1kHz square wave test across both codecs. The IMD results confirm that SBC struggles with mixed frequency content, producing a number of notable spikes of distortion, while aptX does not. The 1kHz square wave test did not show similar anomalies, so there’s clearly an issue with mixed high and low-frequency content with SBC.
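For a sense of where intermodulation spikes land, here is a minimal sketch that lists the sum and difference products of two test tones. The 60Hz and 7kHz tones are a hypothetical low-plus-high pairing chosen for illustration, not necessarily the tones used in the test above, and the function name is ours.

```python
def imd_products(f1_hz, f2_hz, max_order=3, limit_hz=20_000):
    """Frequencies |m*f1 - n*f2| and m*f1 + n*f2 for m, n >= 1 with
    m + n <= max_order, keeping only those inside the audible band."""
    products = set()
    for m in range(1, max_order):
        for n in range(1, max_order - m + 1):
            for f in (abs(m * f1_hz - n * f2_hz), m * f1_hz + n * f2_hz):
                if 0 < f <= limit_hz:
                    products.add(f)
    return sorted(products)


# Hypothetical tone pair: one low tone, one high tone.
print(imd_products(60, 7_000))
# [6880, 6940, 7060, 7120, 13940, 14060]
```

A codec that handles mixed content cleanly shows only the two original tones on a spectrum plot. Energy at any of the listed frequencies points to intermodulation distortion rather than general noise.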

It’s important to remember that SBC comes in a variety of possible configurations and levels of quality. While our flagship smartphones all performed reasonably well, other devices using older or lower-cost Bluetooth components may use higher levels of compression and exhibit more distortion and worse noise performance.

Ambiguity is one of SBC’s greatest weaknesses as an audio codec for music. Besides the improved quality, aptX’s greatest strength is its consistent performance.

There is a lot more to comparing codec sound quality than the basic signal-level objective measurements carried out here. Reaching any real conclusions about which codec actually sounds better, and for which types of program material (music), would require a large-scale controlled blind listening test. We don’t have the resources for that right now, but it would certainly be an interesting area for future exploration.

Implications for aptX Adaptive

Building on aptX and aptX HD, Qualcomm’s latest codec is aptX Adaptive, which continues the push for audio quality. We haven’t run comparable objective measurements on that yet, but we can infer some implications based on these results and what we know about aptX Adaptive.

Essentially, the Adaptive codec scales in quality between aptX Classic and HD, depending on the radio frequency environment and content playing. We have observed that the only difference between Classic and HD is the noise floor profile due to the sub-band bit-depth differences. aptX Adaptive is designed to scale between these quality settings on the fly.

Overall, Qualcomm appears to have a solid Bluetooth audio codec in its aptX line-up. The claims of CD-like quality stand up to basic scrutiny, but true Hi-Res over Bluetooth remains a ways off. Meanwhile, the biggest benefit with the move to aptX HD turns out to be its high-frequency fidelity, preserving those subtle shimmers and harmonics that serious music lovers hate to miss out on.