SoundGuys content and methods audit 2021

April 9, 2021

It’s spring, and it’s time for some cleaning up around these parts. Coming off our efforts to solve a few issues with our content and methodology last year, we thought we’d keep the practice going, if only to let people know how things are unfolding.

Since addressing the issues we found last year, we did note a few common themes in our user-submitted FAQ that merited attention, and a few upgrades we were able to make. Our team, site, and content have changed quite a bit since early 2020, so let’s take a look at where things currently are and where it’s all going.

We updated our testing apparatus

For headphones, we replaced our less-than-ideal testing fixtures with a Bruel & Kjaer 5128. Consequently, we’re now more confident in our measurement quality, so we’re going to start adding more measurements to our standard reporting as we dig into the data.

We settled on this head for a number of reasons, mainly that its electronics and anthropomorphic ear do a much better job of approximating how an actual human head would behave when listening to headphones. Most test fixtures use a straight cylindrical tube to simulate an ear canal which… isn’t ideal. You can correct for all sorts of stuff with math, but you’ll run into issues if the physical chamber you’re measuring doesn’t match the one you need (or at least come close to it), and that’s exactly the case with ear canals.

https://www.soundguys.com/introducing-the-newest-soundguy-the-bruel-kjaer-5128-45973/

The downside is that this head is so new that there’s a dearth of target curves adapted for it. Standards like the Harman target haven’t quite yet been cobbled together for this particular fixture.

However, a target curve isn’t always the best way to figure out what you want to know. Essentially, when you weigh a response against a target curve, you’re attempting to estimate how likely it is that your envisioned listener will like whatever device you’re testing. Our envisioned listener is actually over 1 million people a month, and since all of you have different bodies, your experience may vary from our targets. That’s okay! We’re more concerned with finding the best way to serve the most people.
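To make “weighing a response against a target curve” a little more concrete, here’s a minimal Python sketch. It’s an illustration only, not the scoring we actually use: the function name, the frequencies, and every dB value are made up, and it simply reports the root-mean-square gap between a measured response and a target at the frequencies they share.

```python
import math

def rms_deviation_db(measured, target):
    """Root-mean-square difference (in dB) between two responses.

    Both arguments map frequency in Hz to level in dB; only the
    frequencies present in both are compared.
    """
    shared = sorted(set(measured) & set(target))
    if not shared:
        raise ValueError("no shared frequencies to compare")
    squared = [(measured[f] - target[f]) ** 2 for f in shared]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical numbers, for illustration only.
target = {100: 4.0, 1000: 0.0, 3000: 8.0, 10000: 2.0}
measured = {100: 7.0, 1000: 0.0, 3000: 4.0, 10000: 2.0}

print(round(rms_deviation_db(measured, target), 2))
```

A smaller number means the measurement sits closer to the target, but a single figure like this says nothing about where the differences are or whether any one listener would mind them.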

Additionally, many testers who use this particular head have a lot of trouble fitting in-ears to it. To that, I say: “Gee, no kidding. It’s a head with a realistic ear canal, the heck did you expect?” Of course, that’s rude and unnecessary, because most people are simply looking for reliability in their test fixtures for data integrity. We’re a review site, so it matters a great deal if it’s difficult to get a good fit with anything, or if you can expect some performance wonkiness from that issue. So we’ll collect that data and report it.

Solution: house standards for the testing process

Because of these issues, we’re developing two major updates to our testing:

- A way to ensure in-ears are fitted to these ears as well as they can be before testing, or at least as well as a normal person can reasonably expect.

- A house curve of our own, loosely based on research done by Bruel & Kjaer in the 1970s, and by Dr. Olive and the Harman team in the 2010s.

Of course, your ear canals are likely going to be shaped differently than those of our dummy head, but its ear canals are a much closer match than a straight cylindrical volume of air. Consequently, you can expect minor variations between our charts and what you’d hear on your own head, but our equipment can get much closer than other fixtures could. While our house curve will be another variable, it will only be used for our scoring. If you’re comfortable letting the measurements do the talking, you’ll be able to see everything we do in upcoming content.

We updated our charts

To telegraph which measurements are taken with our new setup, we’ve changed the look of our charts to something starkly different: charts from the new setup use a black background instead of a white one.

We also noticed that our isolation and attenuation charts used colors that were difficult for our readers with deuteranopia (a type of color blindness) to read, so we’ve changed the ANC response to a dashed line instead of a solid cyan one. This way, it’s easy to pick out which line is which without color cues.

To our readers: if you see a way we can improve the accessibility of our content, please contact us on Twitter or by email!

We updated our microphone and speaker testing

We also updated our microphone and speaker testing to reflect the upgrades in our headphone testing. To this end, we’ve added a much more exacting test microphone to our setup that allows us to make more granular measurements, and upgraded our equipment to support it.

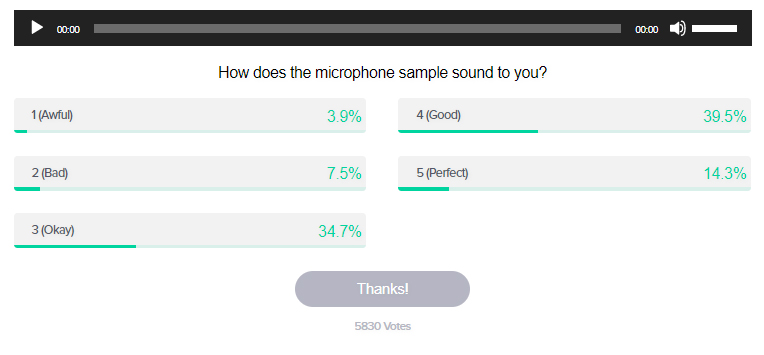

However, for microphone units in headphones, we’ve changed our scoring methodology. You might have seen polls accompanying mic samples on some of our reviews in the past; they’re now on all of our new reviews.

Instead of scoring the microphones on headphone models ourselves, we’ll have the score follow how our readers rate these mic samples. While this may be a little controversial, we feel mic performance in these products should be assessed in a manner appropriate to how they’re going to be used. In this case, these mics will primarily be used to talk to others on the phone or in a remote meeting while others are listening on their headphones. Consequently, we feel they should be judged in aggregate, by the same standards your average user would apply when judging quality.
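As a rough illustration of judging mics in aggregate, the sketch below turns poll votes into a single score. The five rating buckets, the 0 to 10 scale, and the function name are all assumptions made for the example; it isn’t a description of the actual poll or scoring formula.

```python
def poll_score(votes, scale_max=10.0):
    """Average reader rating, rescaled to 0..scale_max.

    `votes` maps a rating (1 = worst ... 5 = best) to how many
    readers picked it. Returns None when nobody has voted yet.
    """
    total = sum(votes.values())
    if total == 0:
        return None
    mean = sum(rating * count for rating, count in votes.items()) / total
    # Map the 1..5 mean onto 0..scale_max.
    return (mean - 1) / 4 * scale_max

# Hypothetical poll results, for illustration only.
votes = {1: 10, 2: 20, 3: 40, 4: 20, 5: 10}
print(poll_score(votes))
```

Returning `None` for an empty poll keeps a brand-new review from showing a misleading score before any readers have weighed in.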

Philosophy is everything

Where we diverge from many other audio sites is our philosophy. While we all say that we want to help you buy the right audio products, most every outlet has a different method of getting you an answer. It may not surprise you to hear that different types of consumers want different information, and there’s no real reliable way to satisfy everyone at once.

We recognize that not everyone’s going to be able to dig deep into measurements and specs, so we do our best to ground descriptions in concrete examples that most anyone can experience. Even though our reviews are quite long, we don’t want our readers to get lost or feel confused when they’re looking for a certain piece of information, because that defeats the whole purpose of what we do.

However, keeping things this simple often leaves some of the more passionate enthusiasts behind. We aren’t going to tailor our content in a way that leaves the masses behind either, so rather than change how we do things, we’re just going to add new content on top of what we already do. For that, we’re looking at adding posts that collect all the measurements we take and explain what each means for you. We don’t expect these pages to get a lot of traffic or to be as interesting as the main reviews, but they’re still good to have for completeness’ sake.

We’re on to 2022

This isn’t everything we’ve updated, and there will likely be more things that crop up as we expand our team (and coverage). If you’re curious about our process, how we do things, and why, be sure to stick around for more features regarding this very topic.